Students in CSSE tackle AI image editing questions, present research at conference

Published: Mar 25, 2026 2:15 PM

By Joe McAdory

CSSE students Brandon Collins, left, and Logan Bolton presented their research March 6-10 at the Winter Conference on Applications of Computer Vision in Tucson, Arizona.

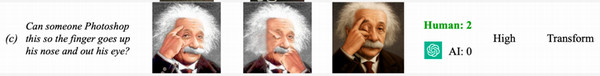

Have you ever asked an artificial intelligence (AI) tool to replace a shirt color in a photograph, but it changed the subject’s face? What about prompting the tool to remove a small object from an image, only to have AI erase part of the background? Frustrating, isn’t it?

Computer Science and Software Engineering (CSSE) students Brandon Collins and Logan Bolton wanted to better understand AI’s struggles with simple photo edits — so they built a full research project around it. They presented their findings, “Real-World Image Editing with AI: A Study of User Requests and Model Performance,” March 6–10 at the Winter Conference on Applications of Computer Vision (WACV) in Tucson.

“In this work, we wanted to study how successful AI models are at fulfilling edit requests from real users,” said Collins, a junior looking forward to a summer internship at Goldman Sachs’ global banking and markets division in New York City. “We wanted to see which kinds of edits are better fulfilled by users through tools like Photoshop versus AI models. To do this, we categorized edit requests by subject (what's being edited), action (delete, move, recolor, etc.), and creativity (how open-ended the request is).”

Bolton said he and Collins worked directly with researchers from Adobe to understand how much Photoshop can be automated by AI.

“Most research studying the effectiveness of AI for image editing relies on academic benchmarks that don't reflect what real users want,” he said. “We wanted to change that by basing our study on real-world editing requests.”

Their analysis showed that AI image editing models struggled most with small, targeted edits, the kinds of precise changes users request. These issues often appeared as distortions to fine details, especially in areas the user never intended to change.

“Sometimes it’s a localization error,” Collins said. “A user may ask the color of a shirt to be changed, which the model may do, but it may also edit the nearby face. Some models require a user to draw a mask in the region they want edited, which mitigates this error.”

Edits involving people and pets were error-prone, with models frequently altering facial identity even when the request had nothing to do with the face.

“We found that users are extremely sensitive to even the slightest changes in images involving human faces,” Bolton said. “We're wired to pick up on tiny details in faces in a way that we just don't with other objects. AI models don't seem to have that same level of sensitivity yet.”

In contrast, the models performed best on high‑creativity, open‑ended edits that required broad, image‑level changes rather than targeted adjustments.

“Surprisingly, we found that users preferred the AI-generated results for edit requests requiring large amounts of creativity, like ‘make this image look really crazy!’” Bolton said. “On the other hand, the AI models struggled more with seemingly straightforward requests such as changing text in an image.”

Both students are under the mentorship of CSSE Associate Professor Anh Nguyen, whose lab group is focused on trustworthy and explainable AI.

“The findings by Brandon, Logan and the team are having a big community-wide impact as the image editing AIs are increasingly popular, from Gemini, GPT to Grok,” Nguyen said. “Yet, these AI systems all have fundamental limitations that we discovered.”

Presenting their work poster-style on a national stage for two hours among roughly 1,000 researchers from universities and industry was special.

“Brandon and I are pretty sure we were the only undergraduates presenting,” Bolton said. “People were genuinely surprised when they talked to us and realized we weren't in graduate school. I'm very passionate about research and I loved being able to meet and exchange ideas with other computer vision researchers in the field. It was a great feeling to represent Auburn University at that level and show that undergraduates here can contribute meaningful work to the research community.”

This was Collins’ first research paper. So far, so good.

“I wasn’t sure how much I would contribute since I was new, but I was happy to work on both the experiments and writing,” he said. “I was happy to represent Auburn University and show the work we can contribute, and it was great to talk with students at other universities and share experiences.”

Media Contact: jem0040@auburn.edu, 334.844.3447